Go Scheduled Task Monitoring Guide

Go is increasingly popular for building backend services and command-line tools, many of which rely on scheduled tasks. Whether you run Go binaries via system cron or use libraries like robfig/cron for in-process scheduling, these jobs share a common problem: they fail silently. A crashed binary exits without notice, and a hung process stays stuck forever.

This guide covers monitoring patterns for Go scheduled tasks, from simple cron scripts to sophisticated job schedulers. For foundational concepts, check out our complete guide to cron job monitoring.

Go Scheduling Options

Go applications handle scheduled tasks in several ways.

System Cron with Go Binaries

The simplest approach: compile a Go program and schedule it with system cron. The binary runs, does its work, and exits.

```
# crontab
0 0 * * * /usr/local/bin/daily-report
```

robfig/cron Library

The most popular Go scheduling library. Jobs run within your Go process on a cron schedule.

```go
import "github.com/robfig/cron/v3"

c := cron.New()
c.AddFunc("0 0 * * *", dailyJob) // run dailyJob every day at midnight
c.Start()
```

go-co-op/gocron

A newer library with a fluent API and built-in monitoring support. gocron v2 (6.9k GitHub stars) includes a SchedulerMonitor interface for Prometheus integration and a companion web dashboard called gocron-ui for real-time job visualization.

```go
import "github.com/go-co-op/gocron/v2"

s, _ := gocron.NewScheduler() // error handling omitted for brevity
s.NewJob(
	gocron.DailyJob(1, gocron.NewAtTimes(gocron.NewAtTime(0, 0, 0))), // every day at 00:00
	gocron.NewTask(dailyJob),
)
s.Start()
```

Built-in time.Ticker

For simple periodic tasks within a long-running application:

```go
ticker := time.NewTicker(1 * time.Hour)

for range ticker.C {
	hourlyJob()
}
```

Monitoring System Cron Go Programs

Let's start with the most common pattern: a Go binary scheduled via system cron.

Basic monitored program:

```go
package main

import (
	"fmt"
	"log"
	"net/http"
	"time"
)

const monitorURL = "https://ping.example.com/abc123"

// Shared client with a timeout (http.DefaultClient has none)
var client = &http.Client{
	Timeout: 10 * time.Second,
}

func ping(endpoint string) {
	resp, err := client.Get(monitorURL + endpoint)
	if err != nil {
		log.Printf("Monitor ping failed: %v", err)
		return
	}
	defer resp.Body.Close()
}

func main() {
	// Signal start
	ping("/start")

	if err := processData(); err != nil {
		// Signal failure before log.Fatal, which exits immediately
		ping("/fail")
		log.Fatal(err)
	}

	// Signal success
	ping("")
	fmt.Println("Job completed successfully")
}

func processData() error {
	// Your job logic here
	fmt.Println("Processing data...")
	return nil
}
```

Key points:

- Create a shared HTTP client with a timeout

- Signal start before any processing

- Signal failure before calling log.Fatal, which exits immediately without running deferred calls

- Signal success only after all work completes

Monitoring robfig/cron Jobs

For in-process scheduling with robfig/cron, wrap your job functions with monitoring.

```go
package main

import (
	"log"
	"net/http"
	"os"
	"time"

	"github.com/robfig/cron/v3"
)

var client = &http.Client{
	Timeout: 10 * time.Second,
}

// One monitor URL per job, read from the environment
var monitors = map[string]string{
	"daily-report": os.Getenv("MONITOR_DAILY_REPORT"),
	"hourly-sync":  os.Getenv("MONITOR_HOURLY_SYNC"),
	"cleanup":      os.Getenv("MONITOR_CLEANUP"),
}

func ping(monitorURL, endpoint string) {
	if monitorURL == "" {
		return // monitoring not configured for this job
	}
	resp, err := client.Get(monitorURL + endpoint)
	if err != nil {
		log.Printf("Monitor ping failed: %v", err)
		return
	}
	defer resp.Body.Close()
}

// monitoredJob wraps a job so every run sends start, fail, or success pings
func monitoredJob(name string, job func() error) func() {
	return func() {
		url := monitors[name]
		ping(url, "/start")

		if err := job(); err != nil {
			log.Printf("Job %s failed: %v", name, err)
			ping(url, "/fail")
			return
		}

		ping(url, "")
	}
}

func dailyReport() error {
	log.Println("Generating daily report...")
	// Report generation logic
	return nil
}

func hourlySync() error {
	log.Println("Syncing data...")
	// Sync logic
	return nil
}

func cleanup() error {
	log.Println("Running cleanup...")
	// Cleanup logic
	return nil
}

func main() {
	c := cron.New()

	c.AddFunc("0 0 * * *", monitoredJob("daily-report", dailyReport))
	c.AddFunc("0 * * * *", monitoredJob("hourly-sync", hourlySync))
	c.AddFunc("0 3 * * *", monitoredJob("cleanup", cleanup))

	c.Start()

	// Block forever (or until signal)
	select {}
}
```

Creating a Reusable Wrapper

For cleaner code, create a reusable monitoring wrapper.

```go
package monitor

import (
	"net/http"
	"net/url"
	"os"
	"time"
)

// Monitor handles job monitoring pings
type Monitor struct {
	BaseURL string
	Client  *http.Client
}

// New creates a Monitor from an environment variable
func New(envVar string) *Monitor {
	return NewWithURL(os.Getenv(envVar))
}

// NewWithURL creates a Monitor with a direct URL
func NewWithURL(baseURL string) *Monitor {
	return &Monitor{
		BaseURL: baseURL,
		Client:  &http.Client{Timeout: 10 * time.Second},
	}
}

// Ping sends a signal to the monitor
func (m *Monitor) Ping(endpoint string) {
	if m.BaseURL == "" {
		return
	}
	resp, err := m.Client.Get(m.BaseURL + endpoint)
	if err != nil {
		return // Silently ignore monitoring failures
	}
	defer resp.Body.Close()
}

// PingWithError sends a failure signal with error details
func (m *Monitor) PingWithError(err error) {
	if m.BaseURL == "" {
		return
	}
	u, parseErr := url.Parse(m.BaseURL + "/fail")
	if parseErr != nil {
		return
	}

	q := u.Query()
	if err != nil {
		msg := err.Error()
		if len(msg) > 100 {
			msg = msg[:100] // keep the query string short
		}
		q.Set("error", msg)
	}
	u.RawQuery = q.Encode()

	resp, httpErr := m.Client.Get(u.String())
	if httpErr != nil {
		return
	}
	defer resp.Body.Close()
}

// Start signals job start
func (m *Monitor) Start() { m.Ping("/start") }

// Success signals successful completion
func (m *Monitor) Success() { m.Ping("") }

// Fail signals job failure
func (m *Monitor) Fail(err error) { m.PingWithError(err) }

// Wrap creates a monitored version of a function (for schedulers like robfig/cron)
func (m *Monitor) Wrap(fn func() error) func() {
	return func() {
		_ = m.Run(fn) // Run already reports the error to the monitor
	}
}

// Run executes a function with monitoring and returns its error
func (m *Monitor) Run(fn func() error) error {
	m.Start()
	if err := fn(); err != nil {
		m.Fail(err)
		return err
	}
	m.Success()
	return nil
}
```

Using the wrapper:

```go
package main

import (
	"log"

	"github.com/robfig/cron/v3"

	"myapp/monitor"
)

func main() {
	reportMonitor := monitor.New("MONITOR_DAILY_REPORT")
	syncMonitor := monitor.New("MONITOR_HOURLY_SYNC")

	c := cron.New()
	c.AddFunc("0 0 * * *", reportMonitor.Wrap(dailyReport))
	c.AddFunc("0 * * * *", syncMonitor.Wrap(hourlySync))
	c.Start()

	select {} // block forever
}

func dailyReport() error {
	log.Println("Generating report...")
	return nil
}

func hourlySync() error {
	log.Println("Syncing data...")
	return nil
}
```

For standalone binaries:

```go
package main

import (
	"fmt"
	"log"

	"myapp/monitor"
)

func main() {
	m := monitor.New("MONITOR_DAILY_JOB")

	// Run sends start, then fail or success, and returns the job's error
	if err := m.Run(processData); err != nil {
		log.Fatal(err) // non-zero exit so cron also records the failure
	}

	fmt.Println("Done!")
}

func processData() error {
	// Your job logic
	return nil
}
```

HTTP Client Best Practices

Go's default HTTP client (http.DefaultClient) has no timeout, so a ping to an unresponsive endpoint can block a job indefinitely. Always use a client with an explicit timeout.

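```go
package main

import (
	"io"
	"log"
	"net/http"
	"time"
)

// Create a client with sensible defaults. Cloning http.DefaultTransport keeps
// its proxy and dial/TLS-handshake timeouts; a bare &http.Transport{} would not.
var client = &http.Client{
	Timeout:   10 * time.Second, // covers connecting, redirects, and reading the body
	Transport: newTransport(),
}

func newTransport() *http.Transport {
	t := http.DefaultTransport.(*http.Transport).Clone()
	t.MaxIdleConns = 10
	t.IdleConnTimeout = 30 * time.Second
	t.DisableCompression = true
	return t
}

func ping(url string) {
	resp, err := client.Get(url)
	if err != nil {
		log.Printf("Monitor ping failed: %v", err)
		return
	}
	defer resp.Body.Close()

	// Drain the body so the connection can be reused
	io.Copy(io.Discard, resp.Body)

	// Optionally check status code
	if resp.StatusCode >= 400 {
		log.Printf("Monitor returned status %d", resp.StatusCode)
	}
}
```

Passing Job Metadata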

Send additional context with your pings for better observability.

package main

import (

"fmt"

"net/http"

"net/url"

"time"

)

var client = &http.Client{Timeout: 10 * time.Second}

func pingWithData(baseURL string, data map[string]string) {

u, err := url.Parse(baseURL)

if err != nil {

return

}

q := u.Query()

for k, v := range data {

q.Set(k, v)

}

u.RawQuery = q.Encode()

resp, err := client.Get(u.String())

if err != nil {

return

}

defer resp.Body.Close()

}

func main() {

monitorURL := "https://ping.example.com/abc123"

// Signal start

pingWithData(monitorURL+"/start", nil)

// Do work

processed, err := processRecords()

if err != nil {

pingWithData(monitorURL+"/fail", map[string]string{

"error": err.Error(),

})

return

}

// Signal success with metadata

pingWithData(monitorURL, map[string]string{

"processed": fmt.Sprintf("%d", processed),

"duration": "45s",

})

}

func processRecords() (int, error) {

// Process logic

return 150, nil

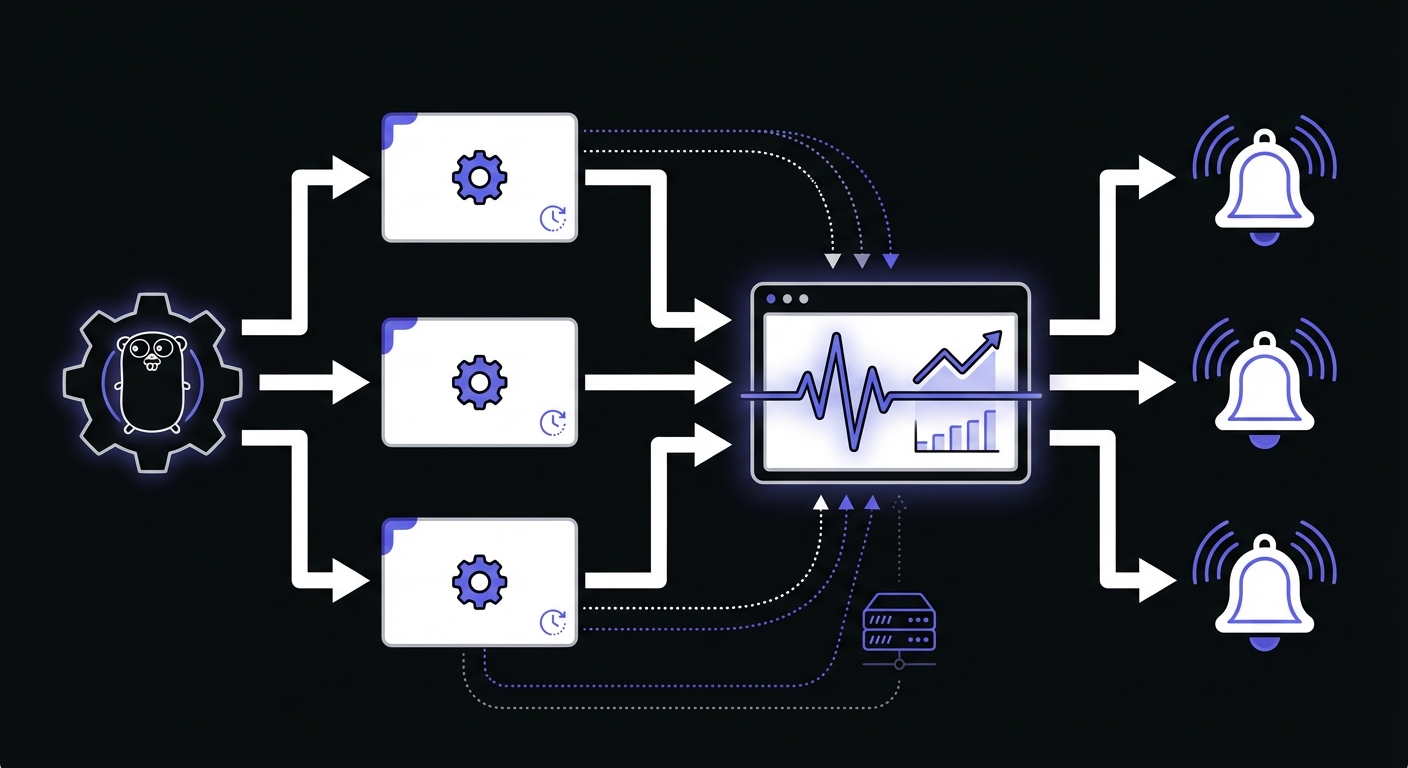

}Prometheus Metrics with gocron

For applications already using Prometheus, gocron v2 provides a SchedulerMonitor interface whose callbacks you can use to record job execution metrics in your existing metrics stack.

package main

import (

"time"

"github.com/go-co-op/gocron/v2"

"github.com/prometheus/client_golang/prometheus"

"github.com/prometheus/client_golang/prometheus/promauto"

)

type PrometheusMonitor struct {

jobsCompleted prometheus.Counter

jobsFailed prometheus.Counter

executionTime prometheus.Histogram

schedulingDelay prometheus.Histogram

}

func NewPrometheusMonitor() *PrometheusMonitor {

return &PrometheusMonitor{

jobsCompleted: promauto.NewCounter(prometheus.CounterOpts{

Name: "cron_jobs_completed_total",

Help: "Total number of successfully completed cron jobs",

}),

jobsFailed: promauto.NewCounter(prometheus.CounterOpts{

Name: "cron_jobs_failed_total",

Help: "Total number of failed cron jobs",

}),

executionTime: promauto.NewHistogram(prometheus.HistogramOpts{

Name: "cron_job_execution_seconds",

Help: "Time spent executing cron jobs",

Buckets: []float64{0.1, 0.5, 1, 5, 10, 30, 60, 300},

}),

schedulingDelay: promauto.NewHistogram(prometheus.HistogramOpts{

Name: "cron_job_scheduling_delay_seconds",

Help: "Delay between scheduled and actual start time",

Buckets: []float64{0.001, 0.01, 0.1, 1, 5},

}),

}

}

func (p *PrometheusMonitor) JobCompleted(job gocron.Job) {

p.jobsCompleted.Inc()

}

func (p *PrometheusMonitor) JobFailed(job gocron.Job, err error) {

p.jobsFailed.Inc()

}

func (p *PrometheusMonitor) JobExecutionTime(job gocron.Job, duration time.Duration) {

p.executionTime.Observe(duration.Seconds())

}

func (p *PrometheusMonitor) JobSchedulingDelay(job gocron.Job, scheduled, actual time.Time) {

p.schedulingDelay.Observe(actual.Sub(scheduled).Seconds())

}

// Implement remaining SchedulerMonitor methods...

func main() {

monitor := NewPrometheusMonitor()

s, _ := gocron.NewScheduler(

gocron.WithSchedulerMonitor(monitor),

)

s.NewJob(

gocron.DurationJob(5*time.Minute),

gocron.NewTask(processData),

)

s.Start()

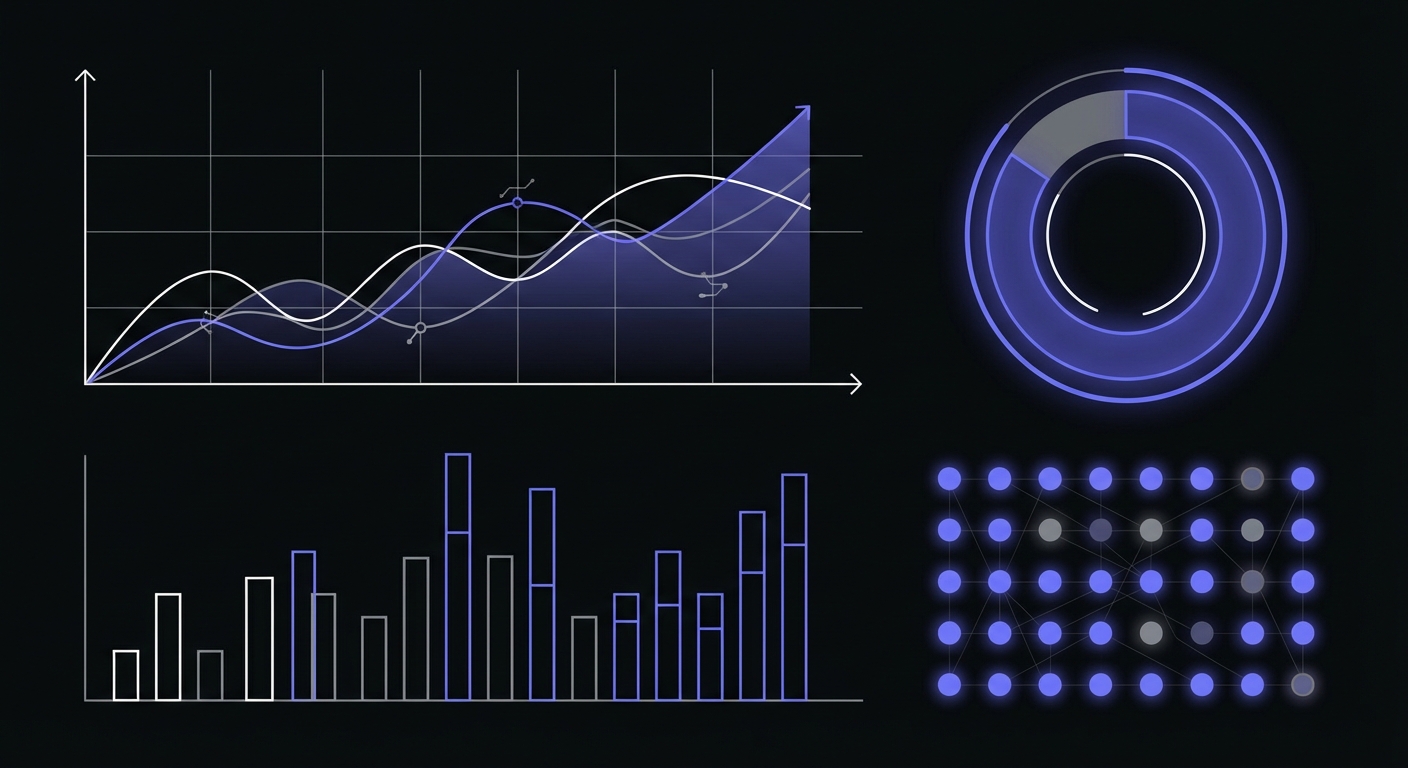

}Key metrics to track:

| Metric | Purpose |
|---|---|
| cron_jobs_completed_total | Track successful executions |
| cron_jobs_failed_total | Alert on failure spikes |
| cron_job_execution_seconds | Detect slow jobs before timeouts |
| cron_job_scheduling_delay_seconds | Identify scheduler overload |

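Error rate calculation: failed / (completed + failed), that is, cron_jobs_failed_total divided by the sum of both counters, usually evaluated over a time window rather than since process start.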
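The arithmetic has one edge case: before any job has run, the denominator is zero. A tiny helper that guards against it is sketched below (errorRate is a hypothetical name); in Prometheus itself you would normally derive the same ratio from the counters' rate() over a window rather than computing it in Go.

```go
package main

import "fmt"

// errorRate returns failed / (completed + failed). It returns 0 when no jobs
// have run yet instead of dividing by zero.
func errorRate(completed, failed float64) float64 {
	total := completed + failed
	if total == 0 {
		return 0
	}
	return failed / total
}

func main() {
	// 3 failures out of 60 runs
	fmt.Printf("%.2f\n", errorRate(57, 3)) // prints 0.05
}
```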

Retry with Exponential Backoff

Jobs that call external services benefit from retry logic. The cenkalti/backoff library provides exponential backoff with jitter to prevent thundering herd problems.

```go
package main

import (
	"context"
	"fmt"
	"io"
	"log"
	"net/http"
	"time"

	"github.com/cenkalti/backoff/v4"
)

// Per-attempt timeout; ctx and MaxElapsedTime bound the whole retry loop
var client = &http.Client{Timeout: 30 * time.Second}

func fetchDataWithRetry(ctx context.Context, url string) ([]byte, error) {
	var result []byte

	operation := func() error {
		req, err := http.NewRequestWithContext(ctx, http.MethodGet, url, nil)
		if err != nil {
			return backoff.Permanent(err) // Malformed request: retrying won't help
		}

		resp, err := client.Do(req)
		if err != nil {
			return err // Network error: retryable
		}
		defer resp.Body.Close()

		switch {
		case resp.StatusCode == http.StatusTooManyRequests || resp.StatusCode >= 500:
			// Rate limited (429) or server error (5xx): retryable
			return fmt.Errorf("retryable status %d", resp.StatusCode)
		case resp.StatusCode >= 400:
			// Other client errors (4xx): don't retry
			return backoff.Permanent(fmt.Errorf("client error: status %d", resp.StatusCode))
		}

		body, err := io.ReadAll(resp.Body)
		if err != nil {
			return err
		}
		result = body
		return nil
	}

	// Exponential backoff with jitter: the library defaults start near 500ms and
	// grow about 1.5x per attempt; here each wait is capped at 30s and the
	// whole retry loop at 2 minutes.
	b := backoff.NewExponentialBackOff()
	b.MaxElapsedTime = 2 * time.Minute
	b.MaxInterval = 30 * time.Second

	err := backoff.Retry(operation, backoff.WithContext(b, ctx))
	return result, err
}

func monitoredJobWithRetry() error {
	ctx, cancel := context.WithTimeout(context.Background(), 5*time.Minute)
	defer cancel()

	_, err := fetchDataWithRetry(ctx, "https://api.example.com/data")
	if err != nil {
		log.Printf("Job failed after retries: %v", err)
		return err
	}
	return nil
}
```

Error categorization for retries:

| Error Type | Action | Examples |

|---|---|---|

| Network error | Retry with backoff | Timeout, connection refused, DNS failure |

| Server error (5xx) | Retry with backoff | 500, 502, 503, 504 |

| Rate limited (429) | Retry with longer delay | Too many requests |

| Client error (4xx) | Do not retry | 400, 401, 403, 404 |

| Permanent failure | Do not retry | Invalid credentials, missing resource |

Go-Specific Considerations

Graceful Shutdown Handling

Long-running schedulers should handle shutdown signals gracefully.

```go
package main

import (
	"log"
	"os"
	"os/signal"
	"syscall"
	"time"

	"github.com/robfig/cron/v3"

	"myapp/monitor"
)

func main() {
	c := cron.New()

	reportMonitor := monitor.New("MONITOR_DAILY_REPORT")
	c.AddFunc("0 0 * * *", reportMonitor.Wrap(dailyReport))

	c.Start()

	// Handle shutdown signals
	quit := make(chan os.Signal, 1)
	signal.Notify(quit, syscall.SIGINT, syscall.SIGTERM)
	<-quit

	log.Println("Shutting down...")

	// Stop scheduling new runs; the returned context is done once running jobs finish
	ctx := c.Stop()

	// Wait for running jobs to complete (with timeout)
	select {
	case <-ctx.Done():
		log.Println("All jobs completed")
	case <-time.After(30 * time.Second):
		log.Println("Timeout waiting for jobs")
	}
}

func dailyReport() error {
	// Report logic
	return nil
}
```

Context Cancellation

For jobs that support cancellation, pass a context so a deadline or shutdown can stop them, and report cancellations to your monitor as failures.

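```go
package main

import (
	"context"
	"log"
	"net/http"
	"time"
)

type MonitoredJob struct {
	MonitorURL string
	Client     *http.Client
}

func (m *MonitoredJob) Run(ctx context.Context, fn func(context.Context) error) error {
	m.ping("/start")

	if err := fn(ctx); err != nil {
		if ctx.Err() != nil {
			// Job was cancelled or ran past its deadline
			m.ping("/fail?error=cancelled")
			return ctx.Err()
		}
		m.ping("/fail")
		return err
	}

	m.ping("")
	return nil
}

func (m *MonitoredJob) ping(endpoint string) {
	if m.MonitorURL == "" {
		return
	}

	// Use a fresh context: the job's ctx may already be cancelled, and the
	// failure ping should still be delivered
	ctx, cancel := context.WithTimeout(context.Background(), 5*time.Second)
	defer cancel()

	req, err := http.NewRequestWithContext(ctx, http.MethodGet, m.MonitorURL+endpoint, nil)
	if err != nil {
		log.Printf("Invalid monitor URL: %v", err)
		return
	}

	resp, err := m.Client.Do(req)
	if err != nil {
		log.Printf("Ping failed: %v", err)
		return
	}
	defer resp.Body.Close()
}

func main() {
	job := &MonitoredJob{
		MonitorURL: "https://ping.example.com/abc123",
		Client:     &http.Client{Timeout: 10 * time.Second},
	}

	// Give the job at most 10 minutes
	ctx, cancel := context.WithTimeout(context.Background(), 10*time.Minute)
	defer cancel()

	err := job.Run(ctx, func(ctx context.Context) error {
		// Your job logic; check ctx.Done() during long-running work
		return nil
	})
	if err != nil {
		log.Printf("Job failed: %v", err)
	}
}
```

Concurrent Job Safety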

When running multiple jobs concurrently, ensure your monitoring doesn't introduce race conditions.

```go
package main

import (
	"github.com/robfig/cron/v3"

	"myapp/monitor"
)

func main() {
	c := cron.New(cron.WithChain(
		cron.Recover(cron.DefaultLogger), // Recover from panics
	))

	// Each job gets its own monitor instance: no shared state between jobs
	c.AddFunc("0 * * * *", func() {
		m := monitor.New("MONITOR_JOB_A")
		m.Run(jobA)
	})

	c.AddFunc("0 * * * *", func() {
		m := monitor.New("MONITOR_JOB_B")
		m.Run(jobB)
	})

	c.Start()
	select {}
}

func jobA() error { return nil }

func jobB() error { return nil }
```

Binary Deployment Considerations

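Go typically compiles to a single statically linked binary (cgo can introduce dynamic dependencies), which simplifies deployment but means you ship and version a compiled artifact rather than source files, so it helps to embed build details in the binary itself.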

Build with version info:

```go
package main

import (
	"fmt"
	"runtime"
)

// Overridden at build time via -ldflags "-X main.version=..."
var (
	version   = "dev"
	buildTime = "unknown"
)

func main() {
	fmt.Printf("Version: %s, Built: %s, Go: %s\n",
		version, buildTime, runtime.Version())
	// ... rest of main
}
```

Build command:

```shell
go build -ldflags "-X main.version=1.2.3 -X main.buildTime=$(date -u +%Y-%m-%dT%H:%M:%SZ)" -o daily-job
```

Crontab entry:

```
0 0 * * * MONITOR_DAILY_JOB=https://ping.example.com/abc123 /usr/local/bin/daily-job
```

Complete Example: Production-Ready Cron Service

Here's a complete example combining all best practices.

```go
package main

import (
	"log"
	"net/http"
	"net/url"
	"os"
	"os/signal"
	"syscall"
	"time"

	"github.com/robfig/cron/v3"
)

// Monitor handles job monitoring
type Monitor struct {
	url    string
	client *http.Client
}

func NewMonitor(envVar string) *Monitor {
	return &Monitor{
		url:    os.Getenv(envVar),
		client: &http.Client{Timeout: 10 * time.Second},
	}
}

func (m *Monitor) ping(endpoint string, params map[string]string) {
	if m.url == "" {
		return
	}

	u, err := url.Parse(m.url + endpoint)
	if err != nil {
		return
	}
	if params != nil {
		q := u.Query()
		for k, v := range params {
			q.Set(k, v)
		}
		u.RawQuery = q.Encode()
	}

	resp, err := m.client.Get(u.String())
	if err != nil {
		log.Printf("Monitor ping failed: %v", err)
		return
	}
	defer resp.Body.Close()
}

func (m *Monitor) Wrap(fn func() error) func() {
	return func() {
		start := time.Now()
		m.ping("/start", nil)

		err := fn()
		duration := time.Since(start)

		if err != nil {
			errMsg := err.Error()
			if len(errMsg) > 100 {
				errMsg = errMsg[:100]
			}
			m.ping("/fail", map[string]string{
				"error":    errMsg,
				"duration": duration.String(),
			})
			log.Printf("Job failed after %v: %v", duration, err)
			return
		}

		m.ping("", map[string]string{
			"duration": duration.String(),
		})
		log.Printf("Job completed in %v", duration)
	}
}

func dailyReport() error {
	log.Println("Generating daily report...")
	time.Sleep(2 * time.Second) // Simulate work
	return nil
}

func hourlySync() error {
	log.Println("Syncing data...")
	time.Sleep(1 * time.Second) // Simulate work
	return nil
}

func main() {
	log.SetFlags(log.Ldate | log.Ltime | log.Lshortfile)
	log.Println("Starting scheduler...")

	logger := cron.VerbosePrintfLogger(log.Default())
	c := cron.New(
		cron.WithLogger(logger),
		cron.WithChain(cron.Recover(logger)), // log recovered panics through the same logger
	)

	// Register jobs with monitoring
	reportMonitor := NewMonitor("MONITOR_DAILY_REPORT")
	syncMonitor := NewMonitor("MONITOR_HOURLY_SYNC")

	if _, err := c.AddFunc("0 0 * * *", reportMonitor.Wrap(dailyReport)); err != nil {
		log.Fatal(err)
	}
	if _, err := c.AddFunc("0 * * * *", syncMonitor.Wrap(hourlySync)); err != nil {
		log.Fatal(err)
	}

	c.Start()
	log.Println("Scheduler started")

	// Graceful shutdown
	quit := make(chan os.Signal, 1)
	signal.Notify(quit, syscall.SIGINT, syscall.SIGTERM)
	<-quit

	log.Println("Shutting down...")
	ctx := c.Stop()

	select {
	case <-ctx.Done():
		log.Println("All jobs completed")
	case <-time.After(30 * time.Second):
		log.Println("Shutdown timeout")
	}
}
```

Quick Reference

Go Scheduling Libraries Comparison

| Library | GitHub Stars | Best For | Monitoring Support |

|---|---|---|---|

| robfig/cron | 14k | Standard cron expressions | Job wrappers, logging |

| go-co-op/gocron | 6.9k | Fluent API, metrics | SchedulerMonitor, gocron-ui |

| time.Ticker | N/A (standard library) | Simple intervals | Manual implementation |

Monitoring Best Practices

| Practice | Recommendation |

|---|---|

| HTTP timeout | 10 seconds for pings |

| Ping rate | Max 6 per minute per monitor |

| Grace period | 10-20% longer than job interval |

| Error truncation | Limit to 100 characters |

| Retry attempts | 3-5 with exponential backoff |

Common Failure Modes

| Failure | Detection | Prevention |

|---|---|---|

| Silent crash | Missing heartbeat | Start/finish pings |

| Hung process | Duration exceeded | Context timeout + ping |

| Panic | No completion ping | cron.Recover() wrapper |

| Network timeout | Delayed ping | HTTP client timeout |

Conclusion

Go's simplicity and performance make it excellent for scheduled tasks, but the same traits that make Go binaries reliable also make failures invisible. A crashed binary exits silently; a hung goroutine blocks forever without complaint.

Adding monitoring to Go scheduled tasks is straightforward. Create an HTTP client with appropriate timeouts, signal when jobs start and complete, and handle errors explicitly. The reusable monitor pattern shown here works across standalone binaries and in-process schedulers.

Start with your critical jobs: those that process payments, sync important data, or generate reports. Once those are monitored, expand coverage to maintenance tasks. The few lines of monitoring code will save hours of debugging when jobs inevitably fail.

If you are evaluating monitoring tools for your Go applications, see our cron monitoring pricing comparison and best cron monitoring tools guides.

Ready to monitor your Go scheduled tasks? Cron Crew works with any Go scheduling approach. Create a monitor, set your environment variable, and add a few lines of code for complete visibility into your background jobs.